Top SQL Interview Concepts and Questions to Sail through Interviews

Categories:

Written by:

Written by:Nathan Rosidi

Be ready for the most common SQL interview questions and concepts tested in a job interview.

As you already know, SQL is the most used tool in data science. But not only in data science. Whether you’re a programmer, data analyst, reporting specialist, database designer, or admin, you can count on being asked about SQL in your job interview.

The purpose of such questions is to check whether the candidate can write a code on a level required for his or her job. Of course, different job positions require different types and levels of SQL knowledge. However, there are some concepts that are a must-know for anyone using SQL. We’ll cover those concepts by showing you the most popular questions (and answers!).

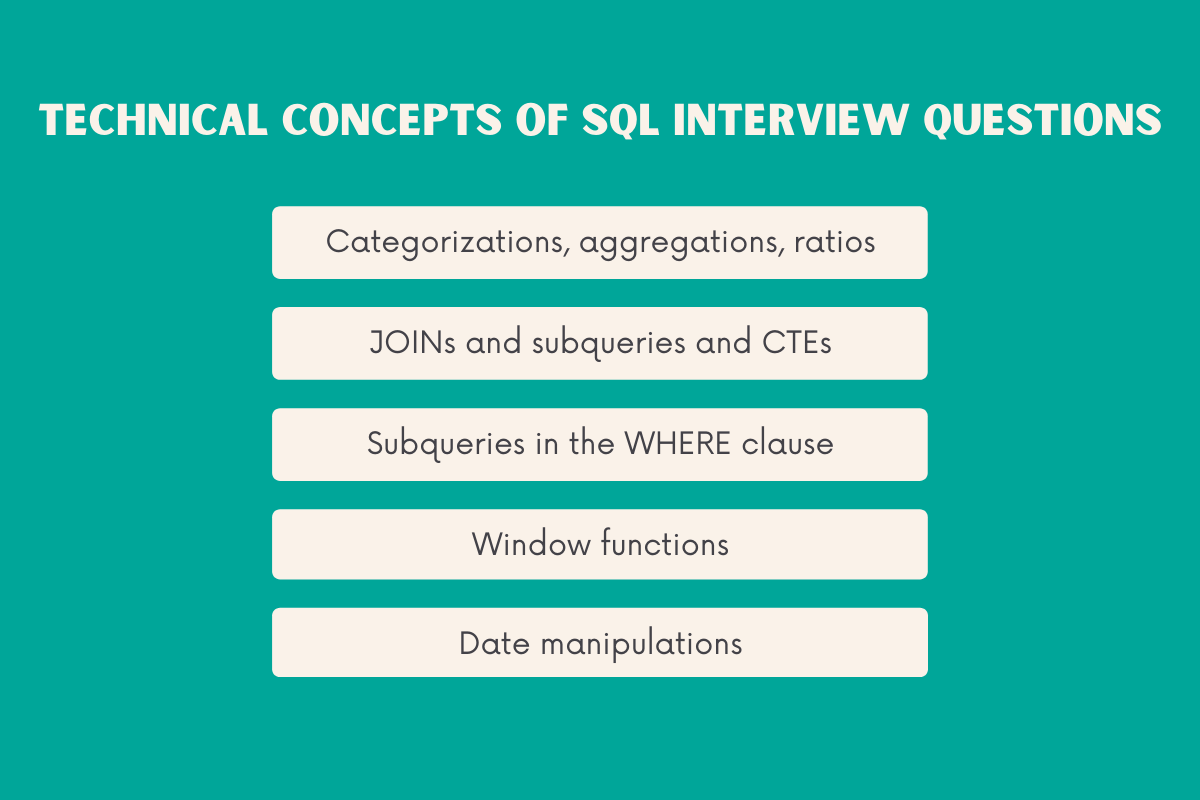

Technical Concepts of SQL Interview Questions

This guide doesn’t cover everything that can come up in the SQL interviews. But it can reasonably prepare you for the most SQL interviews and SQL interview questions. Use this guide to prepare for the complex questions and practice some advanced SQL concepts that your future employer could test. Practice doesn’t make perfect, but it sure makes you more confident in your knowledge. And confidence shouldn’t be underestimated in the interviews. Also, use this guide to make yourself familiar with how companies approach SQL interviews.

The technical SQL concepts we’re going to talk about are:

- Categorizations, aggregations, ratios

- JOINs and subqueries and CTEs

- Subqueries in the WHERE clause

- Window functions

- Date manipulations

1. Categorizations & Aggregations

Even though these are two different concepts, they’re usually tested together in one coding interview question. This concept is probably the most commonly tested concept in SQL interviews, especially in the beginning rounds.

Just to make sure we’re on the same page, let me guide you shortly through the meaning of every concept.

By categorizations, we mean CASE statements in SQL.

Aggregations relate to using the aggregate functions in SQL. The ones like SUM(), AVG(), COUNT(). Aggregation is tested almost without exception and is usually applied after the categorization takes place.

To know how to categorize and aggregate data in SQL is a very important concept to know. When you’re working with data, you’re usually working with a large amount of data. Your task is usually to transform this data into something meaningful that can be used by other people other than data experts. Categorization helps you to organize data in a certain way. And then you can use it to perform some calculations. Such as summing data, finding averages, min and max values, etc. That’s what aggregation does. These are basic calculations but used whenever you want to create some decision-making insights.

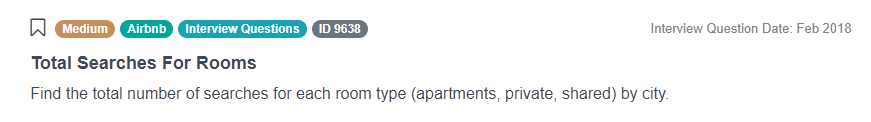

Here’s a question from Airbnb:

“Find the total number of searches for each room type (apartments, private, shared) by city.”

Answer: To answer the question, you need to use the table airbnb_search_details.

SELECT city,

COUNT(CASE

WHEN room_type ILIKE '%apt%' THEN id

ELSE NULL

END) apt_count,

COUNT(CASE

WHEN room_type ILIKE '%private%' THEN id

ELSE NULL

END) private_count,

COUNT(CASE

WHEN room_type ILIKE '%shared%' THEN id

ELSE NULL

END) shared_count

FROM airbnb_search_details

GROUP BY 1To answer this SQL interview question, you need to know well how to use COUNT() and CASE statement. This solution is written in PostgreSQL, so we used its ILIKE keyword, which is not a standard SQL. This is the same as LIKE, but it’s not case-sensitive.

2. JOINs & Subqueries & CTEs

The second technical concept of SQL interviews questions is, again, actually three technical concepts. Those are SQL JOINs, subqueries, and CTEs. Subqueries and CTEs are rather similar in their purpose (with several distinctions), and usually, either of them can be used to achieve the same result. JOINs are, of course, used whenever you’re getting the data from more than one table. Which is always, unless you’re writing some really basic SQL code. And JOINs are used within subqueries and CTEs too.

JOINs are unavoidable when working with data. Simply because the database definition itself calls for using JOINs. In databases, data is always organized in multiple tables. So whenever you need to use data from the database, you’ll need to connect two or more tables to get the data you need. Subqueries and CTEs are not much different; they can be used instead of JOINs. But when you’re writing complex queries, subqueries and CTEs are often easier to use. It’s because they break down the query into logical parts, which are much easier to read than multiple JOINs. This is especially beneficial when you have a complex SQL code to maintain. Subqueries additionally allow you to use the result of the main SELECT statement in a subquery. CTEs even will enable you to reference CTE itself, which you can’t do with the subqueries.

Here’s one example from Google:

“There are two tables with user activities. The 'google_gmail_emails` table contains information about emails being sent to users. Each row in that table represents a message with a unique identifier in the `id` field. The `google_fit_location` table contains user activity logs from the Google Fit app. There is no primary key, however, unique rows that represent `user sessions` are created using a set of columns: user_id, session, step_id.

Find the correlation between the number of emails received and the total exercise per day. The total exercise per day is calculated by counting the number of user sessions per day.”

Answer: To answer the question, you need to use the tables google_gmail_emails, google_fit_location

SELECT corr(COALESCE(n_emails :: NUMERIC, 0), COALESCE(total_exercise :: NUMERIC, 0))

FROM

(SELECT to_user,

DAY,

COUNT(*) AS n_emails

FROM google_gmail_emails

GROUP BY to_user,

DAY) mail_base

FULL OUTER JOIN

(SELECT user_id,

DAY,

COUNT(DISTINCT session_id) AS total_exercise

FROM google_fit_location

GROUP BY user_id,

DAY) loc_base ON mail_base.to_user = loc_base.user_id

AND mail_base.DAY = loc_base.DAYAs you can see, this query is written using the subquery and OUTER JOIN. If you want, you can also re-write it by using the CTE. You also have to know the aggregate functions and a function that calculates the correlation to solve this SQL interview question. What’s being tested is if you can use two sources of data, aggregate data on a required level, and get only one number as a result.

3. Subqueries in the WHERE Clause

Speaking of subqueries, they can be found in the FROM clause, as in the example above. But they can also be found in the WHERE clause, which can be rather slippery for some people.

Using the WHERE clause is helpful when you need to filter data. Using the subquery in the WHERE clause allows you not only to filter data but also do it according to criteria or data that is not explicitly stated in the data you have.

The question from Yelp is a good example:

“Find the business and the review_text that received the highest number of 'cool' votes.

Output the business name along with the review text”

Answer: To answer this question you need to use the table yelp_reviews.

SELECT business_name,

review_text

FROM yelp_reviews

WHERE cool =

(SELECT max(cool)

FROM yelp_reviews)The solution above uses the subquery to get data with the maximum cool votes. The maximum is not something you have ready in your data, so you have to find it to return the business_name and review_text in your result. Why not solve this SQL problem another way? For example, maybe you could get the same result if you just sorted data descendingly and then select the top row. Would that get you the same result? Yes, if you’re lucky, but not in this case. If several businesses have the same (top) number of cool votes, you would get only one of them and miss all others. That way, you would fail this SQL interview question because the correct result returns two businesses, which both have the highest number of cool votes.

4. Window Functions

To sail through the job interview, you’ll also need to know the window functions in SQL. They are heavily utilized in working with data.

The window functions can be seen as some sort of advanced aggregate functions. Like aggregate functions, they allow you to aggregate data. But they also do it in a way that the aggregation is not done in a single row; window functions can aggregate data so that every single stays visible, and the aggregate results are shown in the additional columns.

For example, you could use the RANK() function the rank all the rows and choose according to which column. But you can also do it not for the entire data; you can rank the rows within the certain subgroup. Let’s say, you have data on employees salaries. You can, of course, rank the employees from the highest to the lowest paid in general. But windows function also allows you to do that, for example, for every department separately too quite easily. That way you’ll have overall and departmental ranking in the same result.

You can also use NTILE function to create groups in data by. For example, if your company has a that is sold daily. With the NTILE function, you can group the monthly sales into, let’s say, groups of the first ten days in a month, the second ten-day, and the final then days.

You can do all sorts of other things with other aggregate functions if you use them as window functions. They allow you to get the data from any previous or following row. That way you can calculate running total, moving averages, differences between different periods (such as monthly/quarterly/yearly revenue differences), etc. Again, you can do that not only on the overall data, but according to subgroups you define when you define a window. That’s why they’re called window functions.

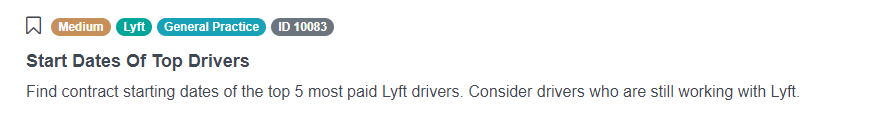

To see how the window functions can be used, here’s one Lyft question:

“Find contract starting dates of the top 5 most paid Lyft drivers. Consider drivers who are still working with Lyft.”

Answer: To answer this question, you should use the table lyft_drivers.

SELECT start_date

FROM

(SELECT start_date,

rank() OVER (ORDER BY yearly_salary DESC)

FROM lyft_drivers

WHERE end_date IS NULL

ORDER BY yearly_salary DESC) sq

WHERE rank <=5This one’s not complicated. However, it manages to test you on window functions, RANK() in this case. This function will allow you to rank the drivers first and then implement other criteria to get you the desired result. Besides that, you’ll also have to use the subquery.

We also suggest checking out Window Functions in SQL.

5. Date Manipulations

The last technical concept of SQL interview questions is date manipulations. This one is always asked because everyone in data analysis works with dates and analyzes time series. This is one of the main jobs for a data analyst: get the raw data and aggregate it by weeks, months, or years. To do that, you’ll need to know how to manipulate dates.

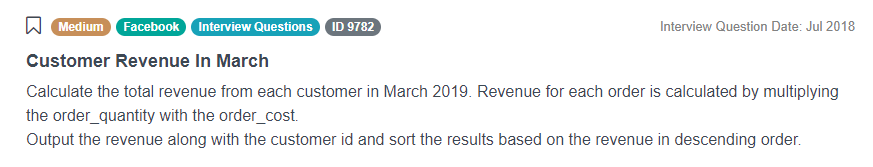

One such question is asked by Facebook:

“Calculate the total revenue from each customer in March 2019. Revenue for each order is calculated by multiplying the order_quantity with the order_cost.

Output the revenue along with the customer id and sort the results based on the revenue in descending order.”

Answer: To answer this question, you’ll have to use the table orders.

SELECT cust_id,

SUM(total_order_cost) AS revenue

FROM orders

WHERE EXTRACT('MONTH'

FROM order_date :: TIMESTAMP) = 3

GROUP BY cust_id

ORDER BY revenue DESCTo solve this SQL interview question, you’ll need to extract the month from the order_date to get the customers from March 2019. The code is not too difficult; it tests some simple aggregation, ordering, and filtering of data. You probably know that already, so you just need to know some Date/Time functions, such as EXTRACT().

Find more Advanced SQL interview questions here.

Conclusion

Without knowing these five technical concepts of SQL interview questions, you probably won’t fare well in the SQL job interviews. There are some simple and some more complex questions that can ask you these concepts. Whatever it is, make sure you’re familiar with them.

Make sure you practice them enough and go through other questions on StrataScratch.

. . .

Originally published on https://cult.honeypot.io

Share